Why Choose Us

Boosts Your Website Traffic!

We are passionate about our work. Our designers stay ahead of the curve to provide engaging and user-friendly website designs to make your business stand out. Our developers are committed to maintaining the highest web standards so that your site will withstand the test of time. We care about your business, which is why we work with you.

Experience

Pay for Qualified Traffic

Ewebot stays ahead of the curve with digital marketing trends. Our success has us leading the pack amongst our competitors with our ability to anticipate change and innovation.

SEO Analysis

90%

SEO Audit

79%

Optimization

95%

320m

Digital global audience reach

1350

Content pieces produced every day

89%

Of the audience is under 34 years old

94%

Employees worldwide

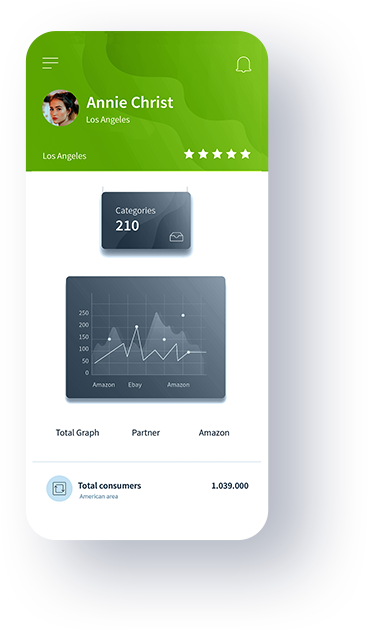

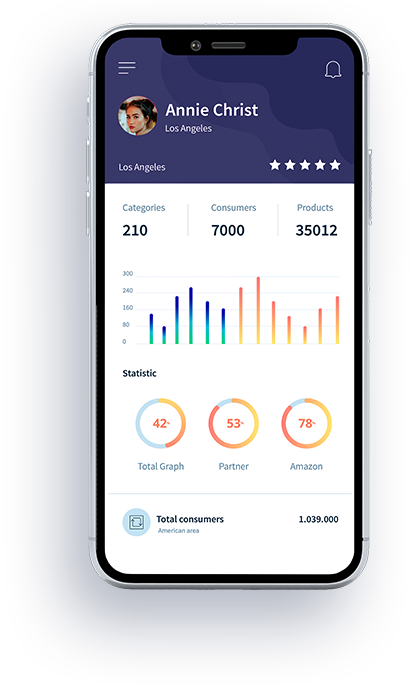

Care Features

Provide Awesome Service

With Our Tools

Our services

How Can We Help?

Search Engine Optimization

Maecenas elementum sapien in metus placerat finibus. Lorem ipsum dolor sit amet, vix an natum labitur eleif.

Online Media Management

Maecenas elementum sapien in metus placerat finibus. Lorem ipsum dolor sit amet, vix an natum labitur eleif.

Social Media Strategy

Maecenas elementum sapien in metus placerat finibus. Lorem ipsum dolor sit amet, vix an natum labitur eleif.

Real Time and Data

Maecenas elementum sapien in metus placerat finibus. Lorem ipsum dolor sit amet, vix an natum labitur eleif.

Penalty Recovery

Maecenas elementum sapien in metus placerat finibus. Lorem ipsum dolor sit amet, vix an natum labitur eleif.

Reporting & Analysis

Maecenas elementum sapien in metus placerat finibus. Lorem ipsum dolor sit amet, vix an natum labitur eleif.

Featured Projects

Our Case Studies

Why Choose Us

What We Offer

Get a Free SEO Analysis

Ne summo dictas pertinacia nam. Illum cetero vocent ei vim, case regione signiferumque vim te.

Blog

Latest News

Ad nec unum copiosae. Sea ex everti labores, ad option iuvaret qui. Id quo esse nusquam. Eam iriure diceret oporteat.

Testimonials

What Our Clients Say

Ewebot stays ahead of the curve with digital marketing trends.

Design is a way of life, a point of view. It involves the whole complex of visual communications: talent, creative ability, manual skill.

Denis Robinson

Customer

Design is a way of life, a point of view. It involves the whole complex of visual communications: talent, creative ability and technical knowledge.

Silviia Garden

Customer

Design is a way of life, a point of view. It involves the whole complex of visual communications: talent, creative ability and technical knowledge.

Tommy Dents

Customer